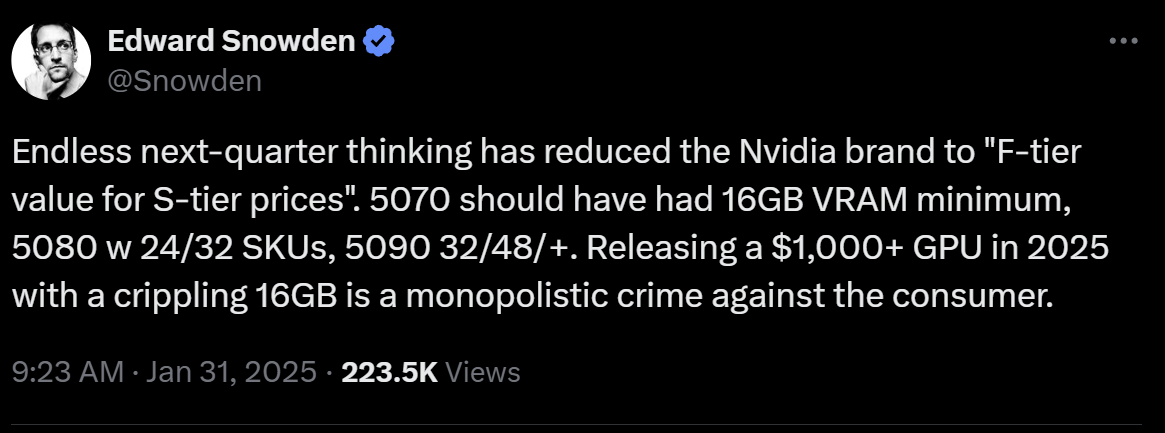

Vote with your wallets. DLSS and Ray Tracing aren’t worth it to support this garbage.

DLSS and RT are great… but this gen definitely sucks. Just get a 4080 (if they’re cheaper).

Ray tracing is a money grab.

Ray tracing is pretty nice. You get real, sharp reflections and can see around corners, which is a minor gameplay benefit that could be exploited. I’m rather of the opinion that 4K is the money grab. It gives you no tangible benefits and makes you upgrade your GPU, while everything runs like you have a 970 at 1080p. More Hz is better than more res.

Totally agree about 4K. It’s useful for work (it’s like four 1080p screens!), but for gaming it’s way overkill.

Lol wut? No it’s not. That’s a ridiculous thing to say. Properly implemented RT is gorgeous and worlds ahead of rasterized lighting. Sure, some games have shit RT, but RT in general is not a money grab. That’s a dumb thing to say.

Game engines don’t have to simulate sound pressure bouncing off surfaces to get good audio. They don’t have to simulate all the atoms in objects to get good physics. There’s no reason to have to simulate photons to get good lighting. This is a way to lower engine dev costs and push that cost onto the consumer.

> Game engines don’t have to simulate sound pressure bouncing off surfaces to get good audio.

Sure, but imitating good audio takes a lot of work. Just look at Escape From Tarkov, which has replaced its audio component twice (I think?) in 5 years, and the output is only getting worse. If they had an audio component that simulated sound more realistically with a minimal performance hit compared to current solutions, I think they’d absolutely use it instead of going over thousands of occlusion zones just to get something “good enough”.

> They don’t have to simulate all the atoms in objects to get good physics.

If it solved all physics interactions, I imagine developers would love it. During TotK development, Nintendo spent over a year on physics alone. Imagine if all of that could be solved simply by putting in some physics rules. It would save a huge amount of development time.

> There’s no reason to have to simulate photons to get good lighting.

I might be misremembering, but I’m pretty sure raytracing can’t reproduce the double-slit experiment, because it’s not actually simulating photons. It does simulate light in a more realistic way, and it’s going to make lighting scenes much easier.

> This is a way to lower engine dev costs and push that cost onto the consumer.

The only downside of raytracing is the performance cost. But we could’ve used that same argument against 3D engines in the early 90s. Eventually the tech will mature and raytracing will become the norm. If you argued that raytracing is a money grab at this very moment, I’d agree. The tech isn’t quite there yet, but I imagine it will be within the next decade. However, you’re presenting raytracing as something useless, and that’s just disingenuous.

Ray tracing is conceptually lazy and computationally expensive. Fire off as many rays as you can in every direction from every light source; when a ray hits something, that spot gets lit up and fires off more rays of lower intensity and maybe a different colour.

Sure, you can optimize things by capping the number of bounces or the distance each ray can travel, but all that does is decrease the quality of your lighting. An abstracted model can be optimized like crazy, BUT it takes a lot of manpower (paid hours) and doesn’t directly translate to revenue for the publisher.
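To put a rough number on what a bounce cap throws away, here’s a toy back-of-the-envelope sketch. It assumes every surface reflects the same fraction of incoming light (the `albedo` parameter and the `captured_fraction` helper are made up for illustration; real renderers are far more complicated). Under that assumption each bounce carries `albedo` times the energy of the previous one, so cutting paths off after N bounces keeps 1 - albedo^(N+1) of the total light.

```python
def captured_fraction(albedo: float, max_bounces: int) -> float:
    """Share of the total (infinite-bounce) light energy that survives when
    light paths are cut off after `max_bounces` bounces.

    Toy model (an assumption, not how any real engine works): every surface
    reflects the same fraction `albedo` of the light that hits it, so bounce k
    carries albedo**k of the direct light's energy.
    """
    if not 0.0 <= albedo < 1.0:
        raise ValueError("albedo must be in [0, 1)")
    if max_bounces < 0:
        raise ValueError("max_bounces must be >= 0")
    # Direct light (k = 0) plus bounces 1..max_bounces.
    kept = sum(albedo ** k for k in range(max_bounces + 1))
    # Sum of the infinite geometric series 1 + albedo + albedo**2 + ...
    total = 1.0 / (1.0 - albedo)
    return kept / total


if __name__ == "__main__":
    for bounces in (0, 1, 2, 4, 8):
        print(f"albedo 0.5, {bounces} bounce(s): {captured_fraction(0.5, bounces):.1%}")
```

With an albedo of 0.5, two bounces already keep 87.5% of the energy, but with brighter surfaces (albedo 0.9) two bounces keep only about 27%, so how much quality the cap costs depends heavily on the scene.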

> The only downside of raytracing is the performance cost.

The downside is the wallet cost. The development cost of a better conventional lighting system, spread over thousands of copies of a game, is negligible per copy; requiring ray tracing hardware means an extra 500-1000 bucks that could otherwise be spent on games.

> The downside is the wallet cost.

The wallet cost is tied to the performance cost. Once the tech matures, companies will start competing on price and “the wallet cost” will come down. The rest of what you’re saying is just you repeating yourself. And now I also have to repeat myself.

> If you argued that raytracing is a money grab at this very moment, I’d agree. The tech isn’t quite there yet, but I imagine within the next decade it will be. However, you’re presenting raytracing as something useless, and that’s just disingenuous.

There’s no reason to argue about the present; I agree that right now raytracing really isn’t worth it. But if you’re going to keep arguing that raytracing will never be worth it, you’d better come up with better arguments.

Or, you know, buy an AMD card and quit giving your money to the objectively worse company.

For the people looking to upgrade: always check the used market in your area first. For now, the best thing to do is pretty obviously to try to get a 40 series card from the drones who must have the 50 series.

I’ve never had a GPU. I want 4K120, so which one should I get?

Not sure if it’s a thing in your area, but a second-hand 4090 at a decent price should do it.

AMD exists, people.

Put your money where your mouth is, please?

Not at the higher end, it doesn’t.

Have you seen Nvidia’s 50 series? AMD is accidentally higher end now.

Yeah, they suck, but at least they exist. AMD flat out said they’re not even gonna try anymore.

Dunno about this generation ’cause I’m not in the market for new parts, but AMD usually comes within spitting distance at much lower prices.

It makes less and less sense to buy Nvidia as time passes, yet people still do.

Well, that’s just not true. I’ve been using AMD exclusively for 10 years now, and in all that time they haven’t had a proper competitor to NVIDIA’s high end. I’m willing to settle for less performance to avoid a shitty company, but some people aren’t. It can’t make “less and less sense” to go with NVIDIA if they’re the only ones making the product you want to buy. More importantly, though: it doesn’t matter. The point is that people wanting performance above a 4080 are screwed, because NVIDIA shat the bed with the 5000 series and AMD just doesn’t exist at that price point, so the comment I’m replying to makes no sense.

The overwhelming majority of people are not spending $3000 on a graphics card. Chasing the fastest for the sake of it is something most people are not doing.

All other price points are covered, and on average slightly better served by AMD at that.

Welp. That’s another lie. Until very recently, if you wanted performance above NVIDIA’s 60-tier on AMD, your only options were the Vega 56, the Vega 64 or the Radeon VII, which were all trash. It wasn’t until the 6000 series that AMD was able to come close to NVIDIA’s 80-tier, and they’ve come out and said they’re not doing that anymore, so we have a grand total of TWO high-end Radeon cards. Your assertion that AMD has covered every price point below $3000 is pure fantasy.

You took a specific period where, yes, AMD was struggling.

My assertion is correct because we are not in that period anymore.

Did you miss the part where I pointed out AMD said they were gonna stop trying with high end? We were barely out of it for 2 generations and now we’re right back into it.

Very few people actually need or make use of the power that Nvidia’s high-end cards provide.

I’ve got the feeling that GPU development is plateauing: new flagships consume an immense amount of power, and they’re humongous. I do give DLSS, local AI and similar technologies the benefit of the doubt, but they’re just not there yet. GPUs should be more efficient and improve in other ways.

Just like I rode my 1080 Ti for a long time, it looks like I’ll be running my 3080 for a while lol.

I hope that in a few years, when I’m actually ready to upgrade, the GPU market isn’t so dire… All signs are pointing to no, unfortunately.

Same here.

Only upgraded when my 1080 died, so I snagged a 3080 for an OK price. Not buying a new card until this one dies. Nvidia can get bent.

Maybe Team Red next time…

I’m extremely petty and swore off Nvidia for disabling ShadowPlay on my 660 unless I made an account; I had to downgrade my driver to get it back without one. I only have an Nvidia card now because I got a 3080 Ti for free after my RX 580 died.

Fuck yeah man, I love that conviction. If only everyone were so inclined, the world would be a better place.

I’m still riding my 1080ti…

The 1080 Ti has had the longest useful lifespan of any GPU ever.

I’m still running mine, but I’m likely to buy a 5090 very soon, as I’m making the leap from 2K to 4K gaming and VR.

The 7900 XTX is looking like a pretty good option for a fraction of the price. I think I might go that way. The 24GB also helps future-proof it a bit.

I had a 7900 XTX briefly, but a fan died and they refunded the card rather than fix the fan.

They haven’t really been available since then due to stock issues.

It definitely seemed like the better option than the 4080.

Lol, this reminds me of whatever that card was back in the 2000s or so, where you could literally draw a trace with a pencil to turn the lower version into the higher one.

I barely remember this anymore, but the lower version had certain things deactivated. Something like: my card had four “pipelines” and the high-end one had eight, so a minor hardware modification could reactivate them. It was risky, though, because chips often came out of manufacturing with imperfections, and they would just deactivate the problem areas and sell the chip as a lower-end version.

After a little while, someone put out drivers that could simulate the modification without physically touching the card. You’d read about softmods and hardmods for the lower-end Radeon cards.

I used the softmod and 90% of the time it worked perfectly, but there was definitely an issue where some textures in certain games would have weird checkerboard-pattern artifacting. If I disabled the softmod, the artifacting went away.

Wow, it looks like it is really a 5060 and not even a 5070. Nvidia definitely shit the bed on this one.